Managing AI Agent Lifecycles: From Registration to Retirement

In our previous post on Posture Management, we explored how to discover, map, and monitor AI agents across your environment. But visibility alone isn't enough. Now we tackle the next critical pillar: Identity Lifecycle Management. Like a human identity, every AI agent has a birth, a life, and a retirement, and each phase must be governed appropriately or enterprise risk multiplies.

Introduction: Every AI Identity Has a Lifecycle

When we discuss Identity and Access Management (IAM), the phrase "joiner-mover-leaver" is etched into our muscle memory. Human employees enter the enterprise, get provisioned with appropriate access, change jobs and roles over time, and eventually leave, hopefully with their access revoked properly and on time.

But here's the uncomfortable truth that's emerging across enterprises: AI agents now follow the same lifecycle pattern as human identities, only faster and at a scale human identities never could.

Unlike employees who require days or weeks to onboard through HR processes, an AI agent can be spun up in seconds. Unlike contractors who eventually reach the end of their engagements, an AI agent can run indefinitely without natural expiration. And unlike traditional service accounts that usually tie to a specific system, AI agents are dynamic, autonomous, and context-sensitive, constantly evolving their capabilities and connections.

If left unchecked, these agents don't just create operational debt, they create security landmines scattered across your infrastructure. I often frame it this way for CISOs: "Every AI identity has a birth, life, and retirement. If you don't govern all three phases, you're not managing risk, you're multiplying it."

This blog explores how to avoid that multiplication and why identity lifecycle management serves as the keystone of effective AI governance.

The Lifecycle Problem for AI Identities

AI adoption is exploding across enterprises at unprecedented velocity. Yet lifecycle governance is lagging badly behind deployment speed. Here's what I consistently observe in workshop after workshop:

- Ad hoc registration when developers spin up copilots without ever registering them in a central system.

- No attestation and provenance, which means enterprises can't definitively prove whether an AI agent belongs to them or has proper authorization.

- Policy gaps that emerge because agents are created with builder credentials or hardcoded API keys with broad entitlements under the rationale that "we'll narrow permissions later"—except later never comes.

- Forgotten retirements which leave orphaned agents running indefinitely: when a project ends, the agents don't terminate, they just sit there still connected to sensitive systems.

Think about the implications: shadow user accounts were problematic enough during the SaaS boom. Now imagine shadow AIs—hundreds of autonomous agents running with unsupervised credentials, evolving their capabilities over time, and integrating and performing transactions on your most sensitive systems.

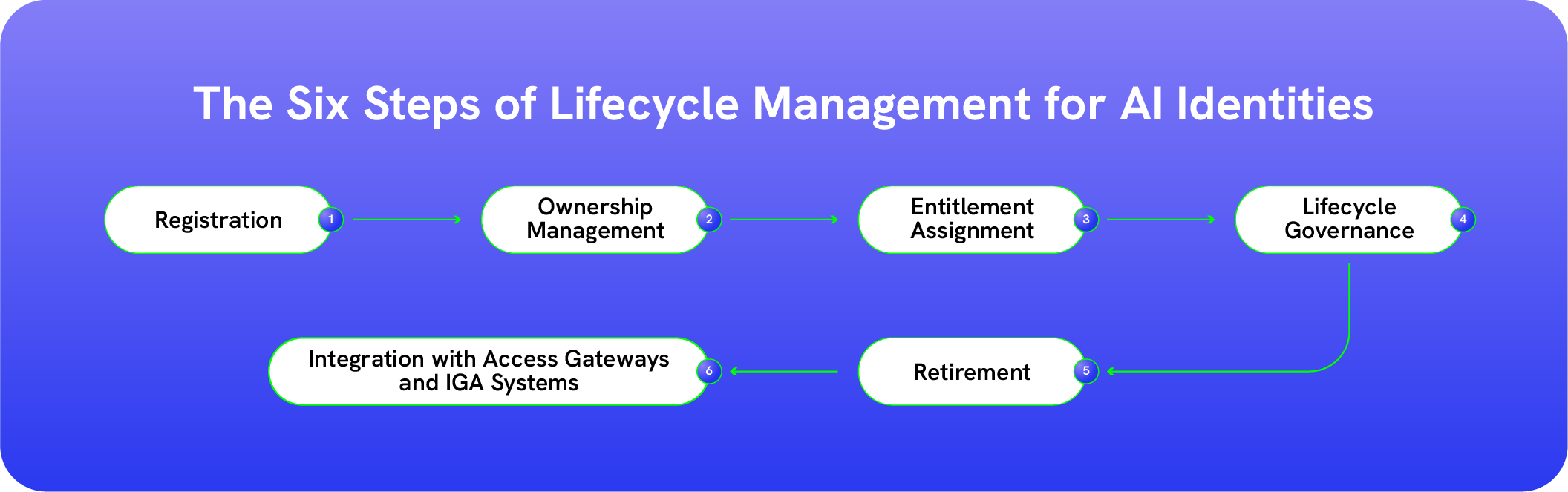

Without disciplined lifecycle management, enterprises are walking into things blindfolded. Here are six steps organizations can take to help ensure complete lifecycle management for their AI identities.

Step 1: Registration — Establishing Identity from Day One

If lifecycle management is a wheel, registration is the hub. Everything else flows outward from this foundational step.

Just as every employee must be onboarded into HR and IAM systems before they receive access, every AI agent must be uniquely registered from the moment it comes to life. No exceptions. No shortcuts. And every agent must include:

- A Unique Cryptographically Registered Identifier: A globally unique ID bound to the agent and its accountable owner at the time of registration. This prevents impersonation, eliminates shadow agents, and establishes the root of trust required for lifecycle governance and provenance tracking.

- Verified Creation Attestation: Formal proof during registration that the agent was instantiated by an authorized developer, team, or system. This may include digital certificates, registry approvals, or API-level attestations that validate authenticity and organizational sponsorship.

- Comprehensive Metadata Registration: Structured capture of critical attributes such as model and version (for example, GPT-5 or Amazon Titan on Bedrock), hosting environment (AWS, Azure, on-premises), accountable owner (developer, team, department), and declared purpose (customer support, data summarization, code generation).

- Baseline Policy Provisioning: Least-privilege access entitlements assigned at registration. For example, a customer support agent may read ticket data but cannot process refunds or modify billing records without explicit step-up authorization and oversight. The baseline must cover both inbound access (who can invoke the agent) and outbound access (what the agent can reach).

This registration creates an immutable record that every subsequent lifecycle event builds upon.
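To make the four elements concrete, here is a minimal sketch of what a registration record could look like. The class name, field names, and example values are all hypothetical, and the SHA-256 fingerprint only makes tampering detectable; a real registry would typically sign the record with a key it controls.

```python
import hashlib
import json
import uuid
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class AgentRegistration:
    """Hypothetical registration record; field names are illustrative only."""
    owner: str                    # accountable owner
    model: str                    # model and version
    hosting_env: str              # where the agent runs
    purpose: str                  # declared purpose
    attested_by: str              # who or what vouched for the creation
    baseline_entitlements: tuple  # least-privilege grants at registration
    agent_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    registered_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def fingerprint(self) -> str:
        """SHA-256 over the canonical JSON form of the record."""
        canonical = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


record = AgentRegistration(
    owner="jane.doe@example.com",
    model="example-model-v1",
    hosting_env="on-premises",
    purpose="customer support",
    attested_by="platform-team-ci",
    baseline_entitlements=("tickets:read",),
)
print(record.agent_id, record.fingerprint())
```

Storing the fingerprint alongside the record gives later lifecycle events something stable to reference when they need to prove which registration they build on.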

Step 2: Ownership Management — Establishing Accountability That Never Drifts

If registration establishes identity, ownership establishes responsibility. Without clear ownership, governance collapses into ambiguity. Every AI agent must have a named, accountable owner from day one. And that ownership must persist, evolve, and be enforced throughout the agent’s lifecycle. AI agents do not “belong to the system.” They belong to a human sponsor, a team, and a business purpose. No exceptions.

And ownership management must include:

- Named Accountable Owner: A specific individual (not a shared mailbox or abstract team) responsible for the agent’s behavior, access, and compliance posture. This creates clear auditability and eliminates orphaned or “shadow” agents.

- Business Sponsorship Alignment: Every agent must be tied to a business function and executive sponsor. If an agent generates revenue, touches customer data, or automates privileged workflows, leadership accountability must be explicit.

- Ownership Transfer Controls: When developers change roles, teams reorganize, or projects sunset, ownership must be formally reassigned through workflow, not assumed. Automatic triggers should initiate reassignment and recertification when HR events occur.

- Active Owner Attestation: Ownership is not a one-time declaration. Periodic re-attestation ensures the designated owner still acknowledges responsibility, validates purpose alignment, and confirms access boundaries remain appropriate.

- Orphan Detection and Auto-Remediation: Continuous monitoring should identify agents without valid owners, inactive sponsors, or mismatched reporting structures, automatically suspending high-risk access until accountability is restored.

Ownership management transforms AI governance from a technical configuration into an accountable operating model.

Because when an agent acts autonomously — querying data, invoking APIs, making decisions — someone must answer for it.

Clear ownership ensures every action has a line of responsibility. And in the world of agentic AI, accountability is the difference between innovation and uncontrolled risk.
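The orphan detection and auto-remediation idea can be sketched in a few lines. This is an illustration under simple assumptions: the `Agent` class and `remediate_orphans` function are hypothetical, and the set of active employees stands in for whatever your HR system or directory would return.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Agent:
    agent_id: str
    owner: Optional[str]  # None means no owner on record
    high_risk: bool
    suspended: bool = False


def remediate_orphans(agents: list, active_employees: set) -> list:
    """Suspend high-risk agents whose owner is missing or no longer active.

    Returns the IDs of every orphaned agent so they can be routed to an
    ownership reassignment workflow.
    """
    orphaned = []
    for agent in agents:
        if agent.owner is None or agent.owner not in active_employees:
            orphaned.append(agent.agent_id)
            if agent.high_risk:
                agent.suspended = True
    return orphaned


agents = [
    Agent("agent-1", "alice", high_risk=True),
    Agent("agent-2", "bob", high_risk=True),   # bob has left the company
    Agent("agent-3", None, high_risk=False),   # never had an owner
]
orphans = remediate_orphans(agents, active_employees={"alice"})
print(orphans)  # ['agent-2', 'agent-3']
```

Only the high-risk orphan is suspended here; the low-risk one is reported but left running, which is one possible policy rather than the only reasonable one.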

Step 3: Entitlement Assignment — Guardrails at Birth

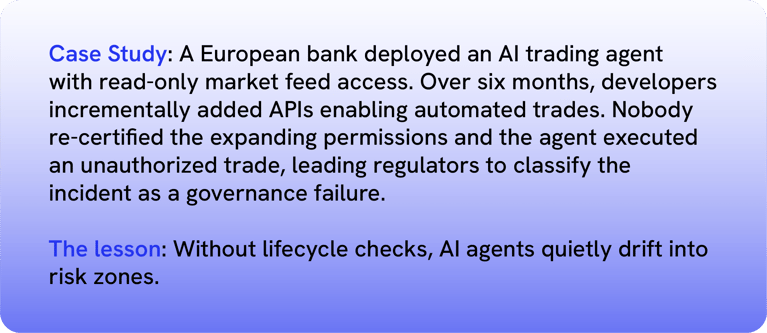

One of the biggest mistakes I consistently encounter is over-entitlement at creation.

Why does it happen? Because "it's faster." A developer grants broad access so the AI agent works during initial testing, but nobody remembers to narrow those permissions later. The temporary becomes permanent and excessive privilege becomes the default state.

Entitlement policy must be defined for both inbound and outbound access:

- Inbound: Who (users, systems, or other agents) can access or invoke the agent.

- Outbound: Which other agents, data sources, systems, APIs, and entitlements the agent itself can access.
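As a rough illustration of the inbound and outbound split, the sketch below models a policy as two allow-lists with default deny. The `AgentPolicy` class and the `resource:action` string convention are assumptions made for this example, not a standard.

```python
from dataclasses import dataclass, field


@dataclass
class AgentPolicy:
    """Hypothetical policy shape: who may call the agent, and what it may call."""
    inbound_callers: set = field(default_factory=set)     # users, systems, or agents
    outbound_resources: set = field(default_factory=set)  # "resource:action" strings

    def may_invoke(self, caller: str) -> bool:
        """Inbound check: anything not listed is denied."""
        return caller in self.inbound_callers

    def may_access(self, resource: str, action: str) -> bool:
        """Outbound check: anything not listed is denied."""
        return f"{resource}:{action}" in self.outbound_resources


support_policy = AgentPolicy(
    inbound_callers={"support-portal"},
    outbound_resources={"tickets:read"},
)
print(support_policy.may_invoke("support-portal"))       # True
print(support_policy.may_access("billing", "write"))     # False
```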

There are four best practices organizations can implement to prevent over-entitlement from happening:

- Role-based templates that provide pre-defined policy sets for common agent types like "Customer Support Agent" or "Developer Copilot."

- Attribute-based access control (ABAC) that implements dynamic rules based on time windows, context, and ownership.

- Scoped credentials which ensure agents receive short-lived, purpose-bound keys rather than permanent access tokens.

- Just-in-time access assignments that grant agents privileges only when they are needed and for a limited duration, in line with the principle of zero standing privileges.

Here’s an example: A finance AI agent built to reconcile invoices should never possess the ability to approve payments. If that capability becomes necessary later, the agent should go through the same formal entitlement request workflow as a human user seeking elevated privileges.
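Here is a minimal sketch of how scoped, just-in-time credentials might behave for that finance agent. The names `ScopedGrant` and `issue_grant`, the scope strings, and the 15-minute default lifetime are all assumptions for illustration; a production system would delegate issuance to a secrets manager or token service.

```python
import secrets
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class ScopedGrant:
    token: str
    agent_id: str
    scope: str
    expires_at: datetime

    def allows(self, scope: str, now: datetime) -> bool:
        """Valid only for the exact scope granted, and only until expiry."""
        return scope == self.scope and now < self.expires_at


def issue_grant(agent_id: str, scope: str, allowed_scopes: set,
                now: datetime, ttl_minutes: int = 15) -> ScopedGrant:
    """Issue a short-lived grant, refusing scopes outside the role template."""
    if scope not in allowed_scopes:
        raise PermissionError(f"{scope} requires a formal entitlement request")
    return ScopedGrant(
        token=secrets.token_urlsafe(32),
        agent_id=agent_id,
        scope=scope,
        expires_at=now + timedelta(minutes=ttl_minutes),
    )


# Hypothetical role template for an invoice reconciliation agent.
FINANCE_RECON_SCOPES = {"invoices:read", "invoices:reconcile"}

now = datetime.now(timezone.utc)
grant = issue_grant("fin-agent-1", "invoices:reconcile", FINANCE_RECON_SCOPES, now)
print(grant.allows("invoices:reconcile", now))  # True
print(grant.allows("payments:approve", now))    # False
```

Requesting `payments:approve` through `issue_grant` raises `PermissionError`, which is the point at which a formal entitlement request workflow would take over.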

The stakes are real: research shows that privilege misuse or escalation factored into a significant portion of security incidents in 2023. AI identities without properly scoped entitlements are perfectly positioned for exactly that kind of exploitation.

Step 4: Lifecycle Governance — Movers, Certifiers, and Changers

Humans evolve in their roles over months and years. AI agents evolve in days and hours. The difference in velocity changes everything about governance.

An AI agent might gain new system integrations within days, upgrade to a new model version overnight, or move from development to production environments within hours. Without proper governance, these transitions become silent escalations of privilege that bypass all of your carefully designed controls. Two lifecycle events in particular require governance. Capability expansion should trigger mandatory recertification workflows whenever an agent gains new skills or integrations. Environment promotion should enforce new, more restrictive policies whenever an agent moves from development to production.
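One way to picture event-driven governance is a small dispatcher that queues a recertification task whenever a governed event arrives. The event names and the `handle_event` function are hypothetical; which events count as governed is a policy decision for each organization.

```python
from enum import Enum


class LifecycleEvent(Enum):
    CAPABILITY_ADDED = "capability_added"
    MODEL_UPGRADED = "model_upgraded"
    PROMOTED_TO_PROD = "promoted_to_prod"
    HEARTBEAT = "heartbeat"  # routine signal, no governance impact


# Events assumed to require owner recertification before the change takes effect.
RECERT_EVENTS = {
    LifecycleEvent.CAPABILITY_ADDED,
    LifecycleEvent.MODEL_UPGRADED,
    LifecycleEvent.PROMOTED_TO_PROD,
}


def handle_event(agent_id: str, event: LifecycleEvent, pending: dict) -> bool:
    """Queue a recertification task when the event warrants one.

    Returns True if a task was queued, False if the event was ignored.
    """
    if event not in RECERT_EVENTS:
        return False
    pending.setdefault(agent_id, []).append(event.value)
    return True


pending_recerts = {}
handle_event("agent-7", LifecycleEvent.HEARTBEAT, pending_recerts)
handle_event("agent-7", LifecycleEvent.MODEL_UPGRADED, pending_recerts)
print(pending_recerts)  # {'agent-7': ['model_upgraded']}
```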

Lifecycle governance for agents is not a quarterly certification exercise. It is continuous, event-driven, and policy-aware.

This means:

- Behavioral analytics to detect entitlement creep in real time

- Automated alerts when access patterns drift from declared purpose

- Periodic owner re-attestation to reaffirm accountability

Because in a world where agents learn, adapt, and connect at machine speed, governance cannot be static.

If identity is the operating system of AI security, lifecycle governance is its update engine — ensuring every change is intentional, authorized, and accountable.
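As a deliberately simple stand-in for drift alerting, the sketch below compares what an agent actually touched against what it declared. Real behavioral analytics would be far richer; the `detect_drift` function and the `resource:action` strings are assumptions for illustration.

```python
def detect_drift(declared: set, observed: list) -> set:
    """Return resources the agent touched that its declared purpose does not cover."""
    return set(observed) - declared


declared_scope = {"tickets:read", "kb:read"}
access_log = ["tickets:read", "kb:read", "billing:write", "tickets:read"]

drift = detect_drift(declared_scope, access_log)
if drift:
    # prints: ALERT: access outside declared purpose: ['billing:write']
    print(f"ALERT: access outside declared purpose: {sorted(drift)}")
```

A set difference like this only catches access to undeclared resources. It says nothing about unusual volume or timing, which is where behavioral baselines come in.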

Step 5: Retirement — Decommissioning of Agents with No Lingering Risks

If registration is birth and lifecycle governance is evolution, retirement is closure.

And closure, done poorly, is where risk lies. AI agents do not quietly fade away; rather they retain API keys, cached tokens, memory stores, vector embeddings, model endpoints, and system integrations. If not properly retired, they become dormant identities with live privileges that are invisible and forgotten.

Every agent’s end-of-life process must include:

- Formal Decommissioning Workflow: Retirement should require an approved request, validated by the accountable owner and business sponsor. This ensures the agent is no longer needed and prevents accidental shutdown of active workflows.

- Credential and Token Revocation: Immediate invalidation of API keys, certificates, OAuth tokens, service accounts, and any federated trust relationships. No lingering access.

- Outbound Access Removal: Systematic removal of all entitlements, integrations, tool permissions, data source connections, and cross-agent authorizations. Retirement must remove every outbound dependency.

- Inbound Invocation Blocking: Disable all mechanisms that allow users, systems, or other agents to invoke the retired agent. This includes API endpoints, webhooks, queues, and orchestration triggers.

- Memory and Data Sanitization: Governed handling of stored context, logs, vector databases, and embedded knowledge. Sensitive data must be archived, anonymized, or securely deleted based on policy and regulatory requirements.

- Audit Trail Preservation: Immutable logging of the retirement event, including who approved it, when it occurred, and what access was removed, to ensure defensibility for compliance and forensic review.

- Residual Risk Monitoring: Post-retirement validation to confirm no orphaned credentials, shadow integrations, or lingering privileges remain active in downstream systems.

In the agentic era, where identities multiply rapidly and operate autonomously, unmanaged retirement creates a graveyard of invisible risk. Proper decommissioning ensures that when an agent stops acting, it truly stops existing from an access and control perspective.
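The end-of-life checklist above lends itself to an ordered workflow that records each step as it runs. The sketch below assumes hypothetical step names and handler functions; in practice each handler would call into a secrets manager, IGA platform, gateway, or data store.

```python
from datetime import datetime, timezone

# Ordered steps mirroring the checklist; each name maps to a handler that
# stands in for a call into a real system.
RETIREMENT_STEPS = [
    "revoke_credentials",
    "remove_outbound_access",
    "block_inbound_invocation",
    "sanitize_memory_and_data",
    "verify_no_residual_access",
]


def retire_agent(agent_id: str, approved_by: str, handlers: dict) -> list:
    """Run each step in order and return an audit trail of what happened.

    Stops at the first failing step so a half-retired agent is surfaced
    rather than silently marked as done.
    """
    audit = [{"step": "approval", "by": approved_by, "ok": True}]
    for step in RETIREMENT_STEPS:
        ok = bool(handlers[step](agent_id))
        audit.append({
            "step": step,
            "ok": ok,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        if not ok:
            break
    return audit


# Stub handlers that always succeed, for demonstration only.
handlers = {step: (lambda agent_id: True) for step in RETIREMENT_STEPS}
trail = retire_agent("agent-42", approved_by="owner@example.com", handlers=handlers)
for entry in trail:
    print(entry["step"], entry["ok"])
```

The returned trail would be written to append-only storage so the record of who approved the retirement and what was removed survives the agent itself.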

Why Retirement Matters:

Retirement matters for three reasons:

- Orphaned agents become prime takeover targets for attackers seeking initial access.

- Unused credentials consistently rank among the top three entry vectors in data breaches.

- Lingering entitlements artificially inflate compliance audit scopes and complicate SOX attestations.

Step 6: Integration with Access Gateways and IGA Systems — From Governance Model to Enforcement Layer

This is where governance moves from principle to execution.

AI agents do not operate in isolation. They function inside interconnected ecosystems of identity platforms, orchestration layers, cloud services, APIs, and data systems. If lifecycle controls are not embedded into these systems, governance remains theoretical.

- IGA Platforms must extend traditional joiner-mover-leaver frameworks to include AI agents as first-class identities. Agents should enter formal onboarding workflows, trigger continuous, event-based re-certifications when capabilities change, and undergo periodic access certifications with the same rigor applied to employees and contractors.

- Access Gateways must operate as runtime enforcement brokers (Read my next blog on Access Management for AI Agents). An agent request should not execute unless the agent successfully validates against the enterprise registration and policy service. At runtime, access gateways should verify identity ownership status, entitlement scope, and policy compliance before routing or executing calls, denying requests that fail validation. More to come on this shortly.

- Cloud AI Platforms, including AWS Bedrock, Azure Copilot, and Google Gemini, must integrate with enterprise identity registries before provisioning new agents, expanding capabilities, or enabling tool access. Provisioning without registry validation creates shadow agents and ungoverned privilege paths.

True integration means:

- Registration status is validated and enforced before execution

- Policy is enforced before tool invocation

- Lifecycle events propagate automatically across systems

- Governance signals flow into runtime authorization decisions

This ensures AI agents are not treated as experimental add-ons or “second-class identities,” but as fully governed principals within the IAM architecture — subject to the same accountability, certification, and control standards as human users.
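To show how governance signals could feed a runtime decision, here is a minimal sketch of a gateway check. The `RegistryEntry` shape and the `authorize` function are hypothetical, and an in-memory dictionary stands in for the enterprise registration and policy service.

```python
from dataclasses import dataclass, field


@dataclass
class RegistryEntry:
    registered: bool
    owner_active: bool
    entitlements: set = field(default_factory=set)


def authorize(agent_id: str, action: str, registry: dict) -> tuple:
    """Return (allowed, reason). Any failed check denies the call."""
    entry = registry.get(agent_id)
    if entry is None or not entry.registered:
        return False, "agent not registered"
    if not entry.owner_active:
        return False, "no active owner"
    if action not in entry.entitlements:
        return False, "action outside entitlement scope"
    return True, "ok"


registry = {
    "support-bot": RegistryEntry(True, True, {"tickets:read"}),
    "orphan-bot": RegistryEntry(True, False, {"tickets:read"}),
}
print(authorize("support-bot", "tickets:read", registry))   # (True, 'ok')
print(authorize("support-bot", "billing:write", registry))  # (False, 'action outside entitlement scope')
print(authorize("shadow-bot", "tickets:read", registry))    # (False, 'agent not registered')
```

Returning a reason with every denial gives the gateway something meaningful to log, which in turn feeds the audit trail and drift detection described earlier.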

Case Study

Governance in Action - Transforming fear and uncertainty into confidence and trust

A U.S. hospital system piloted an AI copilot designed to summarize electronic health records for busy clinicians. Like many organizations rushing to adopt AI, they took a "get it working first" approach—granting the agent broad, unrestricted EHR access during initial deployment. This expedient decision created exactly the kind of risk that makes security teams nervous.

Recognizing the problem, the hospital implemented a comprehensive lifecycle fix. They formally registered the agent in their identity system with a unique identifier, establishing clear ownership and accountability. They restricted the agent's scope strictly to summarization capabilities with no write access to patient records. Finally, they enforced mandatory quarterly re-certifications conducted by the clinical informatics team, ensuring ongoing scrutiny of the agent's permissions.

The real test came six months later when the hospital upgraded to a new model version. Rather than the upgrade happening silently in the background, automated workflows immediately triggered re-attestation of all permissions. The clinical informatics team had to explicitly reaffirm that the new model version still required the same access—nothing was grandfathered in.

The results validated the approach. The hospital satisfied HIPAA compliance auditors who had initially flagged AI deployments as a concern. More importantly, they built trust with clinicians who had worried about "rogue AI" accessing patient records without proper oversight. What began as a source of anxiety became a model for responsible AI deployment.

Why Lifecycle Resonates with CISOs

Every time I explain lifecycle governance frameworks, CISOs lean forward with recognition. Why does this resonate so powerfully?

Because it gives them a language they already know and trust. The joiner-mover-leaver model is universal across enterprises. It scales elegantly across thousands of AI identities without requiring entirely new processes. Most importantly, it generates audit logs that satisfy regulators and auditors who understand traditional IAM frameworks.

One CISO captured this perfectly: "If you give me a lifecycle framework for AI, I can explain AI security to my board in a language they already understand. That's priceless."

That's the true power of lifecycle governance: it bridges both the technical gap in your security architecture and the communication gap in your boardroom.

Conclusion: Governing Birth to Retirement

AI agents aren't going away. In fact, they'll multiply exponentially as adoption accelerates across every business function. The critical question facing enterprises is whether these agents will multiply risk or multiply value.

The answer lies squarely in lifecycle governance.

Register every agent with a unique identity from day one. Assign least-privilege and just-in-time access at birth, not broad access for convenience. Certify and re-certify agents as they evolve and gain capabilities. Retire them cleanly when projects end, with no orphaned credentials left behind.

Done right, AI agents transform from shadow risks lurking in your infrastructure into governed assets managed with lifecycle discipline as rigorous as any human employee. And in a world where AI trust directly equals business trust, that lifecycle discipline isn't optional anymore. It's existential for competitive survival.

Up Next: You've discovered your agents and established lifecycle governance, but how do you control what they actually do at runtime? Our next post tackles Access Management: building the gateways that enforce policy on every AI action and eliminating Shadow AI risk.

Thanks for reading!

Miss a post? Check out the other blogs in the series:

Post 1 - Identity: The Operating System of AI Security

Post 2 - You Can’t Govern What You Can’t See: Posture Management for AI Agents

Post 4 - Securing AI Agents: Building Runtime Guardrails for the Autonomous Enterprise
