As AI agent adoption picks up, security teams are running into a familiar access problem in a new shape. Agents connect to internal systems through APIs, databases, and third-party tools, and they need credentials to do it. Those credentials are frequently longer-lived or more broadly scoped than the task requires. In many setups, access is established once at configuration and never rechecked during execution: the agent keeps calling tools and pulling data with no ongoing validation of whether it should still be doing so.

The result is a growing set of persistent, automated pathways into sensitive systems. These pathways are authenticated and always on, but they often escape the monitoring, least-privilege controls, and runtime checks you would expect in a well-run security program. In other words, many AI agents operate with standing privileges: access that stays active whether or not it's needed and that, by default, nobody governs.

Key concepts

- Standing privileges give autonomous agents persistent, ungoverned execution paths that traditional monitoring cannot contain.

- Over-permissioned AI agents are linked to more security incidents. Organizations that grant AI systems excessive permissions report 4.5x as many security incidents as those that enforce tighter controls.

- Zero Standing Privileges (ZSP) is the baseline for agentic AI. Task-scoped, just-in-time access collapses the exploitable window from indefinite to near zero.

- Runtime access control governs what agents do during execution, which is the layer most organizations are missing.

What does standing privilege mean for AI agents?

Standing privileges are access that stays active whether or not the agent is performing a task, which creates ungoverned execution paths by default. Unlike human users, who operate in sessions, agents operate in execution graphs. An agent can initiate actions, invoke tools, and traverse systems whenever its internal planner decides to.

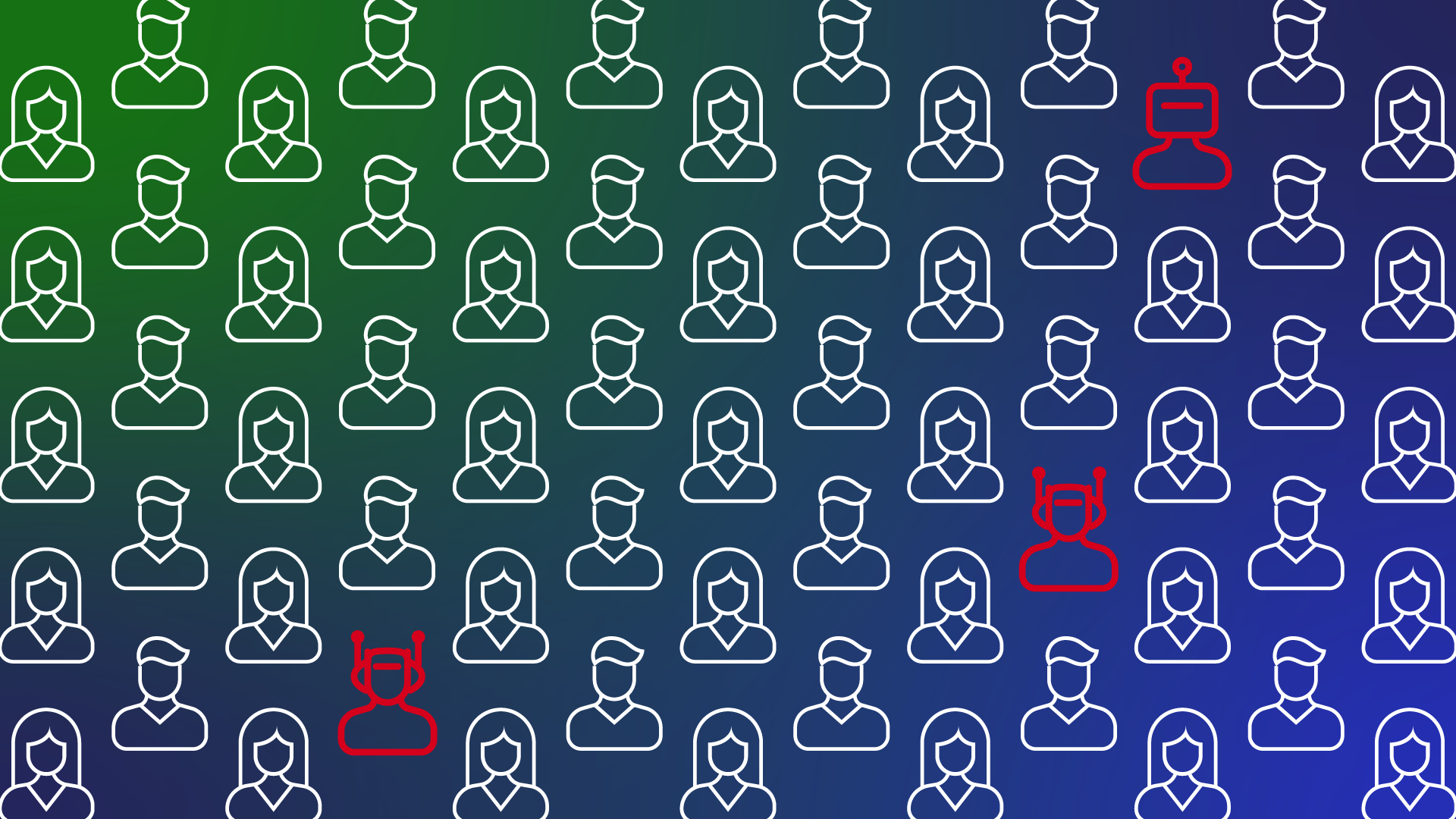

That risk compounds when teams over-provision agents at creation. Teleport's 2026 State of AI in Enterprise Security report found that enterprises granting excessive permissions to AI systems experience 4.5 times as many security incidents as those enforcing tighter controls. Of the security professionals surveyed, 59% reported experiencing an AI-related security incident.

Why AI agents are still given standing privileges

AI agents retain standing privileges because identity infrastructure hasn't kept pace with agentic AI. Most enterprises still rely on identity and privileged access management (PAM) systems designed for human-speed approvals rather than machine-speed execution. Development and business teams deploy agents quickly to solve immediate problems, and access decisions made at creation time have no end date. Without identity systems that can provision, validate, and revoke access at the speed agents operate, organizations default to broad, persistent roles. Agents end up over-permissioned, operating autonomously with limited visibility and no mechanism to take that access away when the task ends.

What happens to over-permissioned AI agents when their projects end?

When over-provisioned agents outlive their projects, they often become zombie agents: orphaned identities with valid credentials, active permissions, and no human owner. Without proper governance, the agent's identity lifecycle doesn't end when the project does. An agent from a project that ended three months ago could still hold the same database access and API keys it received on day one. If a threat actor compromises it, they inherit all of that access. And because the agent's credentials are valid, its activity looks legitimate to most security tools.
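To make the zombie-agent problem concrete, here is a minimal Python sketch of the kind of check an inventory sweep might run. It assumes a hypothetical inventory in which each agent identity records an accountable owner, a project end date, and the credentials it still holds; `AgentIdentity`, `find_zombie_agents`, and the credential strings are illustrative names, not part of any real product.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class AgentIdentity:
    # Hypothetical inventory record; field names are illustrative only.
    name: str
    owner: Optional[str]            # accountable human, or None if orphaned
    project_end: Optional[date]     # None if the project has no end date yet
    active_credentials: list[str] = field(default_factory=list)


def find_zombie_agents(agents: list[AgentIdentity], today: date) -> list[str]:
    """Return names of agents that still hold credentials but have no owner
    or have outlived the project they were created for."""
    zombies = []
    for agent in agents:
        if not agent.active_credentials:
            continue  # no live access, so nothing for an attacker to inherit
        orphaned = agent.owner is None
        expired = agent.project_end is not None and agent.project_end < today
        if orphaned or expired:
            zombies.append(agent.name)
    return zombies


if __name__ == "__main__":
    inventory = [
        AgentIdentity("billing-summarizer", "alice", date(2026, 1, 31), ["db:billing:read"]),
        AgentIdentity("support-triage", "bob", None, ["api:tickets:write"]),
        AgentIdentity("migration-helper", None, None, ["db:prod:admin"]),
        AgentIdentity("old-report-bot", "carol", date(2025, 12, 1), []),
    ]
    # prints ['billing-summarizer', 'migration-helper']
    print(find_zombie_agents(inventory, today=date(2026, 4, 30)))
```

The check itself is trivial; what makes it possible is recording an owner and an end date when the agent is created, so that "no owner" and "project over" are queryable facts rather than tribal knowledge.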

How zero standing privileges work for AI agents

Zero standing privileges (ZSP) is a security model in which no identity, whether human, machine, or AI agent, retains persistent access to any system. The system provisions access dynamically, scopes it to a specific task and duration, and automatically revokes it at completion.

Under ZSP, an AI agent requests access with a defined scope, and a policy engine evaluates the request in real time, checking what the agent is trying to do and how long it needs to do it. If the request is approved, the agent receives time-bound permissions for that task alone, and the system removes them automatically when the task completes or the time limit expires. If an agent's credentials are stolen between tasks, there is nothing to exploit because no access exists to inherit. Just-in-time (JIT) access for agents requires an identity platform that can provision and revoke access at the speed agents operate, not on the human timescales that legacy PAM tools were built for.
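The request, evaluate, grant, and revoke cycle described above can be sketched in a few dozen lines of Python. This is a minimal in-memory illustration under stated assumptions, not how Teleport or any other platform implements ZSP: `JITBroker`, `AccessRequest`, `Grant`, the task-to-permission policy table, and the permission strings are all hypothetical. The clock is injectable so that expiry can be shown without waiting.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class AccessRequest:
    agent_id: str
    task: str                  # hypothetical task name the policy is keyed on
    scope: frozenset[str]      # permissions requested, e.g. {"db:orders:read"}
    ttl_seconds: int           # how long the agent says it needs


@dataclass
class Grant:
    token: str
    agent_id: str
    scope: frozenset[str]
    expires_at: float
    revoked: bool = False


class JITBroker:
    """Hypothetical JIT broker: no access exists until a task requests it,
    each grant is limited to one task's scope, and every grant ends."""

    def __init__(self, policy: dict[str, frozenset[str]], max_ttl: int,
                 clock: Callable[[], float] = time.time) -> None:
        self.policy = policy      # task name -> permissions that task may use
        self.max_ttl = max_ttl    # upper bound on any single grant, in seconds
        self.clock = clock        # injectable so expiry is testable
        self.grants: dict[str, Grant] = {}

    def request(self, req: AccessRequest) -> Optional[Grant]:
        allowed = self.policy.get(req.task, frozenset())  # unknown task: deny
        in_scope = bool(req.scope) and req.scope <= allowed
        in_time = 0 < req.ttl_seconds <= self.max_ttl
        if not (in_scope and in_time):
            return None
        grant = Grant(secrets.token_hex(16), req.agent_id, req.scope,
                      self.clock() + req.ttl_seconds)
        self.grants[grant.token] = grant
        return grant

    def check(self, token: str, permission: str) -> bool:
        grant = self.grants.get(token)
        if grant is None or grant.revoked or self.clock() >= grant.expires_at:
            return False
        return permission in grant.scope

    def complete(self, token: str) -> None:
        # Revoke as soon as the task ends, even if the TTL has time left.
        grant = self.grants.pop(token, None)
        if grant is not None:
            grant.revoked = True


if __name__ == "__main__":
    now = [1000.0]  # fake clock for the demo
    broker = JITBroker({"refund-review": frozenset({"db:orders:read"})},
                       max_ttl=300, clock=lambda: now[0])
    grant = broker.request(AccessRequest("agent-7", "refund-review",
                                         frozenset({"db:orders:read"}), 120))
    print(broker.check(grant.token, "db:orders:read"))   # True
    print(broker.check(grant.token, "db:orders:write"))  # False: outside scope
    now[0] += 121
    print(broker.check(grant.token, "db:orders:read"))   # False: expired
```

Two design choices carry most of the weight here: an unknown task maps to an empty permission set, so the default answer is "no", and `complete` revokes immediately instead of waiting for the TTL, which keeps the window between tasks empty.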

Why runtime access control matters more than design-time policy

ZSP removes persistent access between tasks. Runtime access control addresses what an agent does with its access during a task.

AI agents chain tools through protocols like the Model Context Protocol (MCP) and delegate to other agents via agent-to-agent (A2A) communication. They execute actions faster than any human reviewer could approve them. MCP connections are themselves access paths that security teams need to govern, and with MCP-related vulnerabilities continuing to surface, unmonitored server connections are becoming entry points for lateral movement. A static policy that was correct at provisioning time can stop matching reality seconds later, when an agent's execution plan drifts from its original intent.

Gartner's predictions reinforce this point: by 2030, Gartner expects 50% of AI agent deployment failures to stem from insufficient runtime controls. Design-time controls are necessary, but they are not enough on their own. Access decisions must happen in real time, at the point of action.

This is the problem an agent access gateway is designed to solve. Rather than trusting an agent based on its initial credentials, a runtime gateway evaluates every request against policy before the action executes. If an agent attempts to access a system or perform an action outside its approved scope, the gateway blocks the request before it can take effect.
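A runtime gateway differs from a credential broker in where the check happens: on every individual call, immediately before it executes. The Python sketch below assumes a hypothetical policy keyed by agent, tool, and resource; `AgentAccessGateway`, `Action`, and `PolicyDenied` are illustrative names, and wrapping Python callables stands in for whatever interception point a production gateway would actually use (most likely the protocol or network layer).

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Action:
    agent_id: str
    tool: str        # e.g. "db.query" (hypothetical tool name)
    resource: str    # e.g. "orders"
    operation: str   # e.g. "read", "write", "delete"


class PolicyDenied(Exception):
    pass


# Hypothetical policy: (agent_id, tool, resource) -> operations allowed at runtime.
Policy = dict[tuple[str, str, str], frozenset[str]]


class AgentAccessGateway:
    """Sketch of a runtime gateway: every tool call is checked against policy
    immediately before it runs, and anything not explicitly allowed is denied."""

    def __init__(self, policy: Policy) -> None:
        self.policy = policy
        self.audit_log: list[tuple[Action, bool]] = []

    def allowed(self, action: Action) -> bool:
        key = (action.agent_id, action.tool, action.resource)
        return action.operation in self.policy.get(key, frozenset())

    def execute(self, action: Action, call: Callable[[], Any]) -> Any:
        decision = self.allowed(action)
        self.audit_log.append((action, decision))  # record allows and denies
        if not decision:
            # Blocked before the side effect happens, not detected afterward.
            raise PolicyDenied(f"{action.agent_id} may not {action.operation} "
                               f"{action.resource} via {action.tool}")
        return call()


if __name__ == "__main__":
    gateway = AgentAccessGateway({("agent-7", "db.query", "orders"): frozenset({"read"})})
    deleted: list[str] = []
    print(gateway.execute(Action("agent-7", "db.query", "orders", "read"),
                          lambda: "3 rows"))
    try:
        gateway.execute(Action("agent-7", "db.query", "orders", "delete"),
                        lambda: deleted.append("orders"))
    except PolicyDenied as err:
        print("blocked:", err)
    print(deleted)  # prints []: the delete callable never ran
```

Because the decision is made per call rather than per credential, a change in the agent's plan (here, moving from reading orders to deleting them) is caught at the moment it happens, even though the agent's identity and credentials are unchanged.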

Where this leaves security leaders in 2026

The gap between agent deployment and agent governance is widening. Gartner also projects that 40% of enterprise applications will include task-specific AI agents by the end of 2026, up from less than 5% in 2025. Agents are being deployed faster than the systems designed to manage them can keep up.

This governance gap can be closed in three steps:

- Eliminate standing access. Discover every agent identity, assign human ownership, and remove persistent privileges.

- Enforce task-scoped permissions. Provision access just in time, scope it to individual tasks, and revoke it automatically at completion.

- Require runtime validation. Evaluate every agent action against policy before execution, not after.

Every standing privilege that exists today is an ungoverned execution path for an autonomous system. The question is whether your team will remove them proactively or wait for an incident to force the decision.


Sources

- https://goteleport.com/about/newsroom/press-releases/2026-state-of-ai-in-enterprise-security-report/

- https://thehackernews.com/2026/04/anthropic-mcp-design-vulnerability.html

- https://www.gartner.com/en/newsroom/press-releases/2026-03-11-gartner-announces-top-predictions-for-data-and-analytics-in-2026